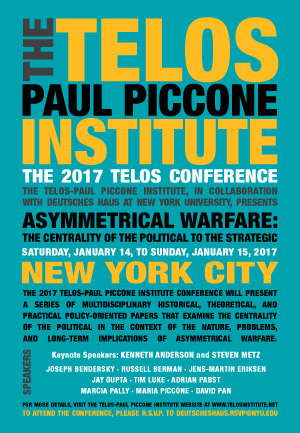

An earlier version of the following paper was presented at the 2017 Telos Conference, held on January 14–15, 2017, in New York City. For additional details about the conference as well as other upcoming events, please visit the Telos-Paul Piccone Institute website.

Recent initiatives to utilize household and personal mobile technologies to further specific security, surveillance, and counter-terrorism objectives pose significant challenges to civil liberties and personal well-being. The social and political statuses of these technological systems are just emerging: they are rapidly being infused into home settings and mobile devices, apparently under the control of users but under at least the partial monitoring and operation of various governmental and corporate entities (Howard, 2015; Hahn, 2017). Individuals are being increasingly surveilled by sets of security-related mechanisms in their home automation and mobile communications devices as well as by other manifestations of the “Internet of Things” (IoT). Tankard (2015) states that IoT “is a vision whereby potentially billions of ‘things’—such as smart devices and sensors—are interconnected using machine-to-machine technology enabled by Internet or other IP-based connectivity” (p. 11). Watson (2015) projects that “Every device will, in the future, be a possible Smart Component on the Internet” (p. 212), which will involve a growing assortment of networked artifacts performing an even wider array of roles in everyday life. Serlin (2015) portrays how IoT technologies can play positive roles, empowering individuals with severe disabilities to perform critical functions.

Toward the “Smart Home”?

Increasing numbers of our household artifacts currently possess or will have the capability to accumulate and analyze data about home life as well as mobile interactions. Some surveillance-oriented intelligent agents are already capable of analyzing IoT-produced household data at a high level of detail, often in order to elicit information about current or potential terrorist compliance or other purported deviance; these initiatives continue a growing legacy of intimate surveillance efforts (Haggerty & Gazso, 2005; Oravec, 1996). For example, the computer components in a networked toaster or microwave oven could be used as a “zombie” in a cyberwar attack, and surveillance systems could be watchful for such a status. In the home of the near future, a majority of the items that household members obtain for the kitchen or bathroom that are connected to computer networks will have remote supervision mechanisms, including “kill switches” that provide for automatic shut-off; these capabilities may be automatically employed, for instance, if the artifacts appear to be harboring data that are problematic in terms of security interests or are possibly being used as part of a suspected cyberterrorist initiative.

Many of the devices that household participants buy and use every day are increasingly entering a status of “joint ownership”; entities other than the purchaser and immediate physical holder of the items are gaining considerable control over (as well as significant benefits from) the items. For example, household members who buy a game console could find that software updates have modified the games they played and possibly erased some of the records they earned through their diligent gaming efforts. An individual who purchases a book to read on an e-book device could find the item missing because of various public policy concerns (such as those related to intellectual property). The very potential for the remote supervision of an artifact can in itself have psychological impacts, for example as announcements or rumors about various latent capabilities affect the character of household activities and routines. The remote supervision of computer-related entities can have especially problematic consequences with the advent of the “smart home,” which may include major appliances such as refrigerators and washing machines as well as less formidable and even disposable devices such as watches and milk cartons (Howard, 2015). Household residents may not have the technical capacity to defend themselves and their residences from emerging problems and malfunctions; control efforts from outside the home could range from simple repairs to total shutdowns in case of suspected cyberattacks.

How will individuals in their households and communities deal psychologically with potential security, autonomy, and privacy-related hazards involved with these technological changes? The awareness of individuals as to the privacy protections and security contingencies of IoT data streams can differ widely, increasing the confusion and opportunism that can accompany their use in terrorist-related investigations as well as legal proceedings involving divorce or insurance fraud. Issues also arise concerning the protected statuses of minors: how will the inputs of children be handled in terms of privacy and security? Home life can be overwhelming as it is, with extensive consumer and interpersonal demands (Oravec, 2015). The sense-making processes of individuals concerning the IoT and related technologies that are being infused into their homes, phones, and community environments may undergo rapid and disorienting shifts as system failures occur or as circumstances produce disorienting outcomes. Such technologies as drones (Rao, Gopi, & Maione, 2016) and self-driving vehicles (Stayton, 2015) are being added to the mix, providing the potential for cognitive overload in individuals attempting to maintain personal mental autonomy. These human-technology interactions also have the potential for forms of “gaslighting,” with strategies that opportunistically induce and subsequently take advantage of users’ anxieties and misapprehensions. For example, gaslighting can be used as part of social engineering efforts to make household members more compliant with IoT system objectives. The origins of the term are tightly coupled with the 1938 play Gas Light (as well as a 1944 film entitled Gaslight, starring Ingrid Bergman), in which various environmental and psychological methods were used to distort a young woman’s sense of reality along with her capacity for autonomous decision making (Calef & Weinshel, 1981).

Asymmetries and Security in the Smart Environment

The counter-terrorist and security-related mechanisms that are emerging in our everyday environments present an assortment of asymmetries in their technological and social configurations; many will have the capability of disabling critical personal and domestic functions, possibly on the basis of “false positive” readings as they attempt to detect security violations. In order to engage in many aspects of their commercial and social interactions, individuals will be harboring (possibly “quartering”) intelligent agents in their homes and on their persons. These technological initiatives can have broad impacts on civil liberties and everyday life. In situations in which U.S. governmental activity is involved, the Fourth Amendment of the U.S. Constitution provides some legal protections against “unreasonable searches and seizures,” which have been generally interpreted in the courts as encompassing computing and control technologies (Oravec, 2003, 2004). The Third Amendment could also have some applicability as various intelligent agents are being effectively quartered in our households just as flesh-and-blood human soldiers were in past centuries. The Amendment reads: “No Soldier shall, in time of peace be quartered in any house, without the consent of the Owner, nor in time of war, but in a manner to be prescribed by law.” The late U.S. Supreme Court Justice William O. Douglas used the Third Amendment in his efforts to uphold household privacy (Reynolds, 2015).

The characters of the IoT regulations and oversight that will develop in the decades to come are uncertain: recent IoT initiatives have yet to meet with substantial levels of scrutiny on the part of governmental agencies in the United States and the United Kingdom. Frieden (2016) declares that “Currently IoT test and demonstration projects operate largely free of government oversight in an atmosphere that promotes innovation free of having to secure public, or private approval” (p. 1). Efforts to balance household privacy and autonomy with other compelling social and political ends could be difficult in IoT-related cases as they have apparently been in many other information technology and telecommunications settings. In order to delineate individuals’ civil liberties in this arena, continued attention to emerging IoT research and development initiatives is essential as well as maintained engagement in the public policy arena.

Some References and Additional Sources

Calef, V., & Weinshel, E. M. (1981). Some clinical consequences of introjection: gaslighting. The Psychoanalytic Quarterly, 50(1), 44–66.

Frieden, R. (2016). Building trust in the Internet of Things. Available at SSRN 2754612.

Haggerty, K. D., & Gazso, A. (2005). Seeing beyond the ruins: Surveillance as a response to terrorist threats. The Canadian Journal of Sociology, 30(2), 169–187.

Hahn, J. (2017). Security and privacy for location services and the Internet of Things. Library Technology Reports, 53(1), 23–28.

Howard, P. N. (2015). Pax Technica: How the Internet of Things may set us free or lock us up. Yale University Press.

Kijewski, P., Jaroszewski, P., Urbanowicz, J. A., & Armin, J. (2016). The never-ending game of cyberattack attribution. In Combatting Cybercrime and Cyberterrorism (pp. 175–192). New York: Springer International Publishing.

Oravec, J. A. (1996). Virtual individuals, virtual groups: Human dimensions of groupware and computer networking. Cambridge University Press.

Oravec, J. A. (2003). The transformation of privacy and anonymity: Beyond the right to be let alone. Sociological Imagination, 39(1), 3–23.

Oravec, J. A. (2004). 1984 and September 11: Surveillance and corporate responsibility. New Academy Review, 1(3) (Cambridge University).

Oravec, J. A. (2015). Depraved, distracted, disabled, or just “pack rats”? Workplace hoarding persona in physical and virtual realms. Persona Studies, 1(2), 75–87.

Rao, B., Gopi, A. G., & Maione, R. (2016). The societal impact of commercial drones. Technology in Society, 45, 83–90.

Reynolds, G. H. (2015). Third Amendment penumbras: Some preliminary observations. Tennessee Law Review, 82, 557–567.

Serlin, D. (2015). Constructing autonomy: Smart homes for disabled veterans and the politics of normative citizenship. Critical Military Studies, 1(1), 38–46.

Stayton, E. L. (2015). Driverless dreams: Technological narratives and the shape of the automated car (Doctoral dissertation, Massachusetts Institute of Technology).

Tankard, C. (2015). The security issues of the Internet of Things. Computer Fraud & Security, 9, 11–14.

Watson, D. L. (2015, September). Some security perils of smart living. In International Conference on Global Security, Safety, and Sustainability (pp. 211–227). New York: Springer International Publishing.